Scenarios in cybersecurity

Cybersecurity leaders have always used various scenario planning methods to improve the ways our teams work. The most common examples of this are tabletop exercises, which most teams do on a regular basis to practice incident response processes and improve effectiveness.

The value of these tabletops is in everyone involved actually going through a realistic incident scenario, having to think ahead of time about how they would deal with it, and learning to make sound decisions in response to various injects.

These methods work. Just ahead of COVID, the leader of physical security in my organization decided to run a global pandemic tabletop exercise. Their scenario was a more benign outbreak of an existing disease, but we learned a lot about how to optimize for large-scale absences and work from home, and were able to apply this just a few months later during the actual COVID pandemic.

We can’t predict the future, but learning organizations forecast what plausible scenarios look like, so they are prepared to make good decisions in the moment.

Now imagine we had a tool that allowed us to build detailed views of how hard, complex issue areas may evolve over a longer timeframe, including areas where we don’t have a lot of direct control. I’ll introduce one in this blog.

A college discovery

Structured foresight itself is not a new activity. It dates back all the way to the 1950s work of Herman Kahn, the author of On Thermonuclear War, when he was at the RAND Corporation. There he was one of the first to develop “scenarios” that helped evaluate nuclear war strategies with the Soviet Union. He would be the one to introduce concepts such as the escalation ladder, and the Doomsday Machine, which still influence our way of thinking about nuclear war.

Shell, facing growing uncertainty in global oil markets, built on his approach in the late 1960s and early 1970s, and famously used it to anticipate the 1973 oil crisis. By building scenarios of the future, they anticipated that the common forecasts of continued growth had their limitations, and were able to diversify their product and pricing structure ahead of the major shock. “Shell scenarios” are still a well-studied example of foresight development, covered in a wide swath of business books and articles.

As a young cybersecurity professional, I myself was a big fan of two things that appeared to be contradictions: rational fact-based analysis and intuition. I loved data: collecting it, analyzing it, understanding it. But I loved even more working with really talented leaders who showed me the limitations of that data, and how they made purposeful, gut-driven decisions that better protected users of their products. Sometimes those decisions aligned with the data, and most times they carefully accounted for it, but still came out differently. I wondered how that seeming incongruence could work.

In 2006, while in graduate school, I read a 1974 paper that would thoroughly shift my perspective. It was Russell Rhyne’s “Technological Forecasting Within Alternative Whole Futures Projections”, in which he introduced Field Anomaly Relaxation (FAR) as a method of predicting possible futures in a particular field.

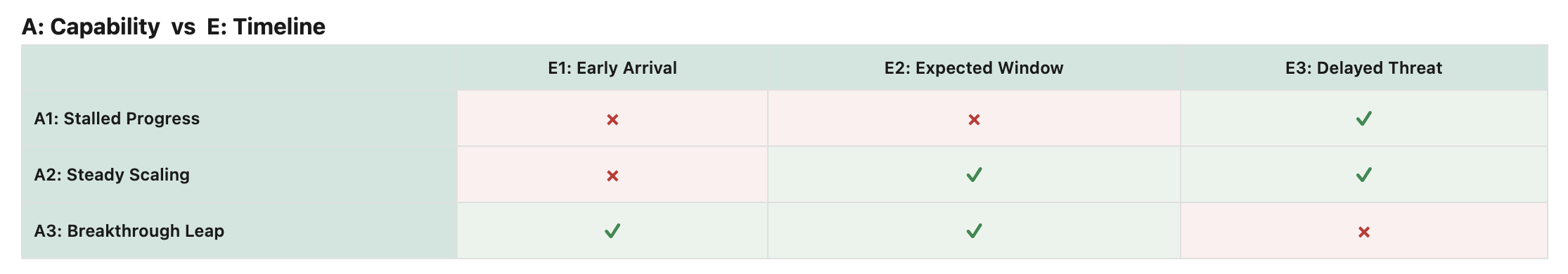

FAR is based on general morphological analysis, developed by Swiss astronomer Fritz Zwicky. Zwicky asserted that when faced with specific “wicked” problems — those that are hard to quantify and solve and where simple causal “A to B” solutions fail due to the wide set of parameters — one can still generate a matrix of possible futures and how those futures are influenced by specific causes.

Zwicky then suggests we can drastically reduce the potential set of viable futures by comparing each parameter value against every other, and assessing whether they can be true together in a viable future. This “cross-consistency assessment” rapidly reduces the possible set of solutions, without having to reduce the number of input variables. Don’t simplify the problem, simplify the likely futures.

Rhyne combined this with Kurt Lewin’s Social Field Theory, which states that behavior is a result of both the individual and the environment, and made the “configuration” of that environment a central part of the foresight process.

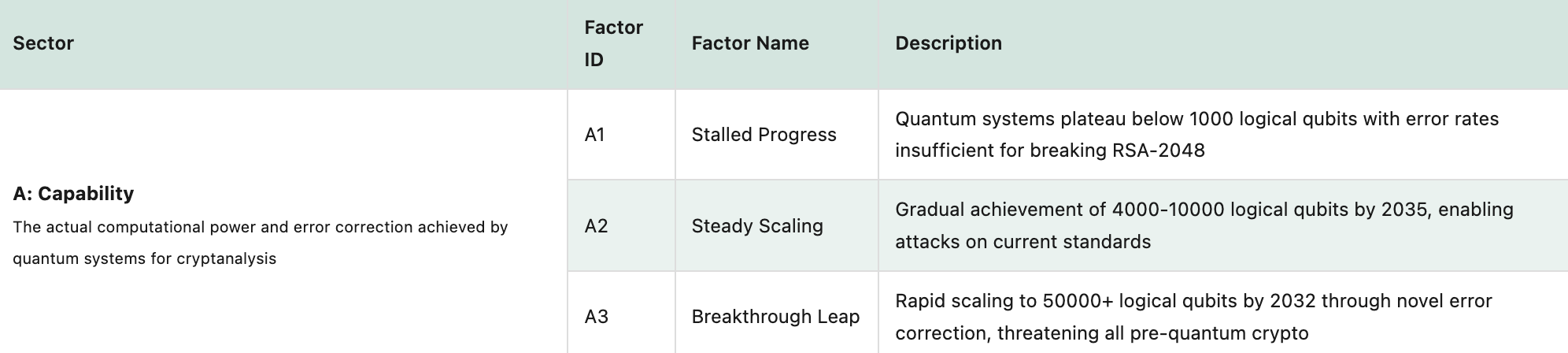

Put simply, his new technique develops a broad view of the “field” you are studying by defining “sectors” and, for each sector, individual “factors”, which represent the sector’s level of intensity and influence. For instance, when studying the field of “potential for revolution” in a society, you may look at “regime strength” as a sector, and “pervasive” or “low” as factors; and “media ownership” as a second sector, with “state-owned” and “fully commercial” as factors.

FAR combines both by asking you to develop the whole field as a grid that contains each possible combination of factors. It then asks you to cross-compare for consistency: look at every pairing of factors and flag the anomalies, those combinations that are unlikely or impossible. In a specific culture, “pervasive” regime strength may not be compatible with “fully commercial” media ownership. By doing this exercise, we can eliminate a large number of possible futures.
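The grid-and-filter step can be sketched in a few lines of Python. This is a minimal illustration, not the code of any particular FAR tool: the sector names, the factors, and the two anomaly judgments are all invented for the example, and in a real workshop the anomalies come from the experts in the room.

```python
from itertools import combinations, product

# Hypothetical sectors and factors, loosely following the example in the text.
SECTORS = {
    "regime_strength": ["pervasive", "moderate", "low"],
    "media_ownership": ["state-owned", "mixed", "fully commercial"],
    "economy": ["growing", "stagnant"],
}

# Pairs of (sector, factor) judged unable to coexist. These judgments are
# invented for illustration only.
ANOMALIES = {
    frozenset({("regime_strength", "pervasive"), ("media_ownership", "fully commercial")}),
    frozenset({("regime_strength", "low"), ("media_ownership", "state-owned")}),
}


def all_configurations(sectors):
    """Yield every configuration: one factor chosen per sector."""
    names = list(sectors)
    for choice in product(*(sectors[name] for name in names)):
        yield dict(zip(names, choice))


def is_consistent(config, anomalies):
    """A configuration survives only if none of its factor pairs is an anomaly."""
    return all(
        frozenset(pair) not in anomalies
        for pair in combinations(config.items(), 2)
    )


configs = list(all_configurations(SECTORS))
survivors = [c for c in configs if is_consistent(c, ANOMALIES)]
print(f"{len(configs)} configurations, {len(survivors)} survive")
```

Note that the judgments are made on pairs of factors, while the filtering is applied to whole configurations: a single flagged pair removes every configuration that contains it, which is why the set of futures shrinks so quickly.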

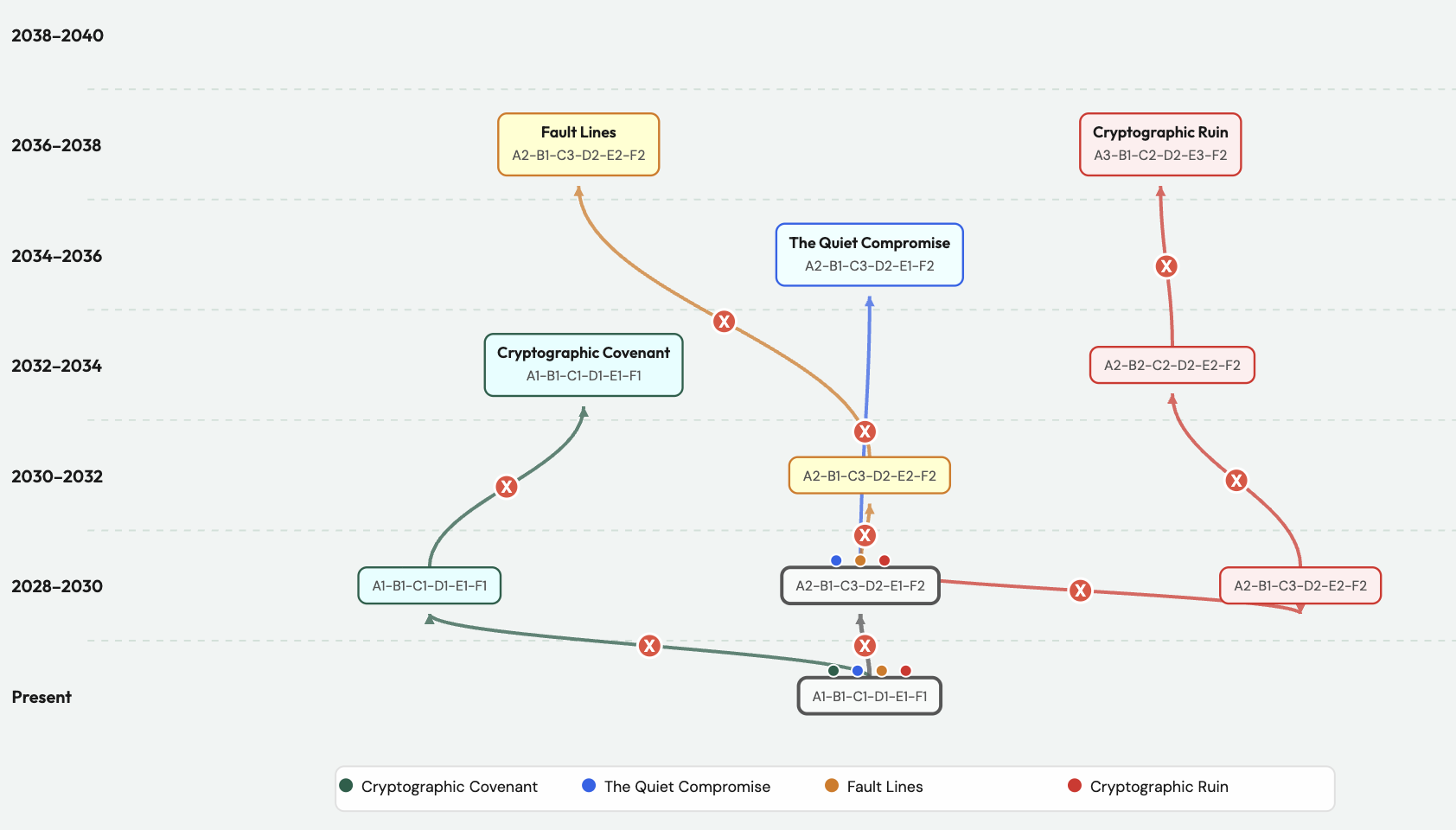

FAR then prescribes that we take the surviving “configurations” (combinations of factors) and map them along a “Faustian tree”, which identifies what logical paths may exist from today’s reality to a future scenario.

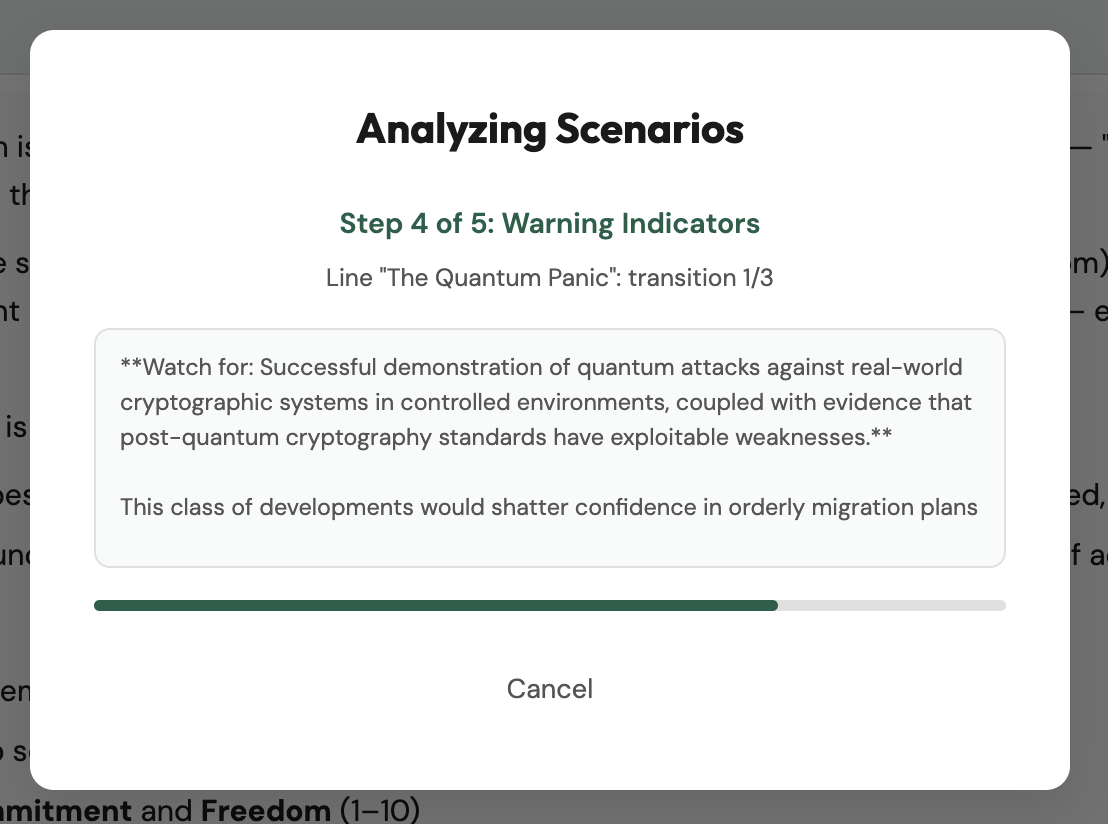

An example of this is below: the lines culminate in a scenario, but trace through various configuration changes to get there. Rhyne refers to the tree as “Faustian” as it originally incorporated a reflection on the human desire and ability to make change, but I most commonly refer to them as scenario lines. For now, ignore the red X marks, which are “warning indicators”. We’ll get to those in the next section.
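One way to experiment with scenario lines is to treat surviving configurations as nodes in a graph and search for paths between them. The sketch below rests on a simplifying assumption that is mine, not Rhyne’s: a transition is considered plausible when exactly one sector changes its factor. The configurations themselves are hypothetical.

```python
from collections import deque

# Hypothetical surviving configurations, written as (regime, media, economy).
SURVIVORS = [
    ("pervasive", "state-owned", "stagnant"),
    ("moderate", "state-owned", "stagnant"),
    ("moderate", "mixed", "stagnant"),
    ("moderate", "mixed", "growing"),
    ("low", "mixed", "growing"),
    ("low", "fully commercial", "growing"),
]


def differs_in_one_sector(a, b):
    """Assumed transition rule: exactly one sector changes its factor."""
    return sum(x != y for x, y in zip(a, b)) == 1


def scenario_line(start, goal, survivors):
    """Breadth-first search for the shortest chain of single-sector changes."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in survivors:
            if nxt not in seen and differs_in_one_sector(path[-1], nxt):
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no line connects the two configurations


line = scenario_line(SURVIVORS[0], SURVIVORS[-1], SURVIVORS)
for step in line:
    print(" / ".join(step))
```

In a workshop the experts decide which transitions are believable. A rule like this one is only a starting point for that conversation.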

Field Anomaly Relaxation is especially helpful for the analysis of long-term problems and societal state changes. It’s a process that uses experts to develop a deep understanding of the potential futures, and then allows those experts to more rapidly reduce the solutions available, so we can more easily interact with the problem and make better choices.

Rhyne himself used his model, in various stages of development, on areas such as Indonesia’s sea-sovereignty and corporate planning. The Australian Defence Science and Technology Organisation (DSTO) has used it to assess potential future urban states, and others have found it valuable in aiding smart grid development. Coyle and Yong also validated the approach in an attempt to understand potential futures for the South China Sea.

Outside of its value in wicked problems, there’s an underexplored area of FAR that I think makes it a unique fit for cybersecurity work.

Indications and Warning

The clearest use case for it is in threat intelligence, and in particular in support of “early warning”, the component of this discipline tasked with giving us early notice of a crisis. As FAR helps us develop scenarios of the future, we can ask a specific question at every transition: which combination of sectors and factors had to change to get us there? I’ll call that change a “trigger”.

If we understand ahead of time that a trigger opens the door to a potential set of futures, we now know that transition is important to monitor for. We can set the right intelligence collection requirements so we will have early warning of when a factor changes.
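Finding the trigger between two steps on a scenario line amounts to a diff of the two configurations. A minimal sketch follows. The sector names, factors, and collection requirements are hypothetical placeholders, not taken from any real analysis.

```python
def find_triggers(before, after):
    """Return {sector: (old_factor, new_factor)} for every sector that changed."""
    return {
        sector: (before[sector], after[sector])
        for sector in before
        if before[sector] != after[sector]
    }


# Two hypothetical, adjacent configurations on one scenario line.
today = {
    "quantum_hardware": "lab-scale",
    "pqc_adoption": "early",
    "regulation": "voluntary",
}
next_step = {
    "quantum_hardware": "rapidly scaling",
    "pqc_adoption": "early",
    "regulation": "voluntary",
}

# Hypothetical mapping from a sector to an intelligence collection requirement.
WATCHLIST = {
    "quantum_hardware": "follow preprints and specialist publications",
    "regulation": "track draft mandates and standards updates",
}

for sector, (old, new) in find_triggers(today, next_step).items():
    requirement = WATCHLIST.get(sector, "no requirement set yet")
    print(f"{sector}: {old} -> {new} | collect: {requirement}")
```

Running this diff over every transition on every scenario line gives a list of sectors worth monitoring, and shows which ones still lack a collection requirement.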

Much of cybersecurity’s approach to threat intelligence has been focused on tactical warning — we see an indicator, so we know a compromise may be imminent.

FAR can allow us to have a much richer understanding of how threats will develop and move us from the “tactical” warning above, to “strategic” warning, the area of focus of Cynthia M. Grabo. As she stated in her classic early warning book, “Anticipating Surprise: Analysis for Strategic Warning” published in declassified form in 2002: “Warning is an intangible, an abstraction, a theory, a deduction, a perception, a belief … it can be neither confirmed nor refuted until it is too late”. FAR allows us to identify indicators for strategic shifts.

Take post-quantum cryptography as an example. A sudden drop in the number of qubits required for a working quantum computer doesn’t mean the threat is imminent, but it makes the future where quantum computers break existing cryptography meaningfully more credible. That kind of signal is exactly what FAR helps us watch for. We can task our threat intelligence team to follow less mainstream academic research (such as preprints and specialist cryptography publications), and treat a shift like this as an early indicator that efforts to deploy quantum-resilient cryptography may need to accelerate.

Putting Field Anomaly Relaxation to use

I first tried to use Field Anomaly Relaxation during college, to try and understand the potential for revolution in a given society. But throughout my career I’ve applied its principles wherever I needed some method of foresight.

Distilled to its core questions, FAR has helped me think through what activities we might see from an adversary, or how a thorny security problem may evolve. It never provides an “answer” or “prediction”, but a set of potential futures I can then match against real changes in adversary behavior, or against new security issues as I learn about them.

Recently, I thought it would be helpful to have a tool that would allow me to experiment with this a little bit more, and perhaps even introduce LLMs as a way of providing some additional creative prompting. With support from Claude Code and Gemini, I built a small research prototype. Given the richness of Rhyne’s work, there are many details that can be improved, but it’s functional and has been fun to play with. You can find the FAR analysis tool, and the source code on GitHub.

The way it works is simple, and best done in a brainstorming session with a group of experts.

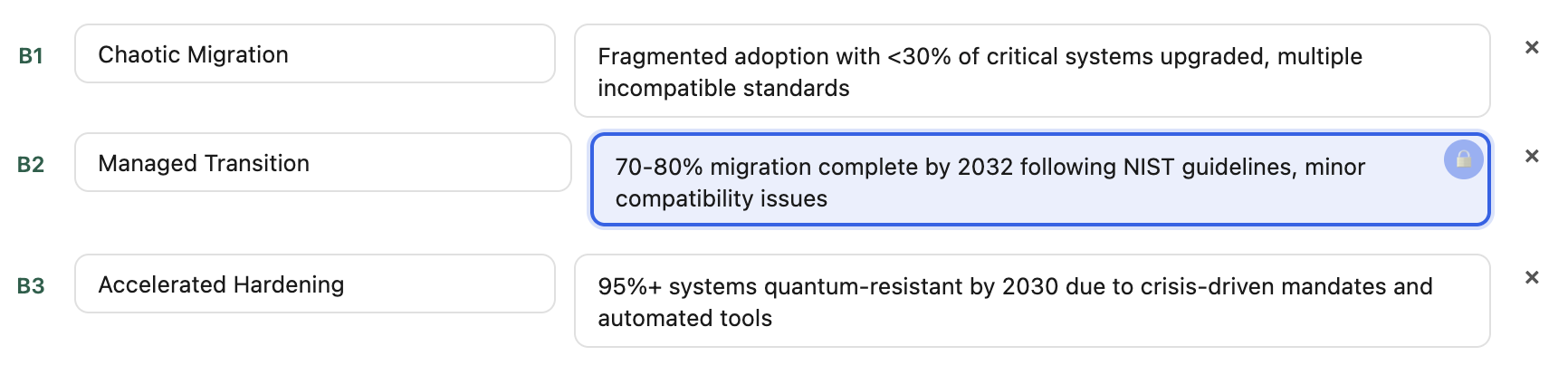

You begin by describing some initial ideas you have on the field, its boundaries and key uncertainties. Next, you identify 6–7 of your primary “sectors”, which are major areas that influence the field you are exploring, and the factors each of them may take on.

Next, you review which sector/factor combinations are unlikely to coexist. You then check the surviving combinations for overall plausibility, and mark each as a Pass or Reject.

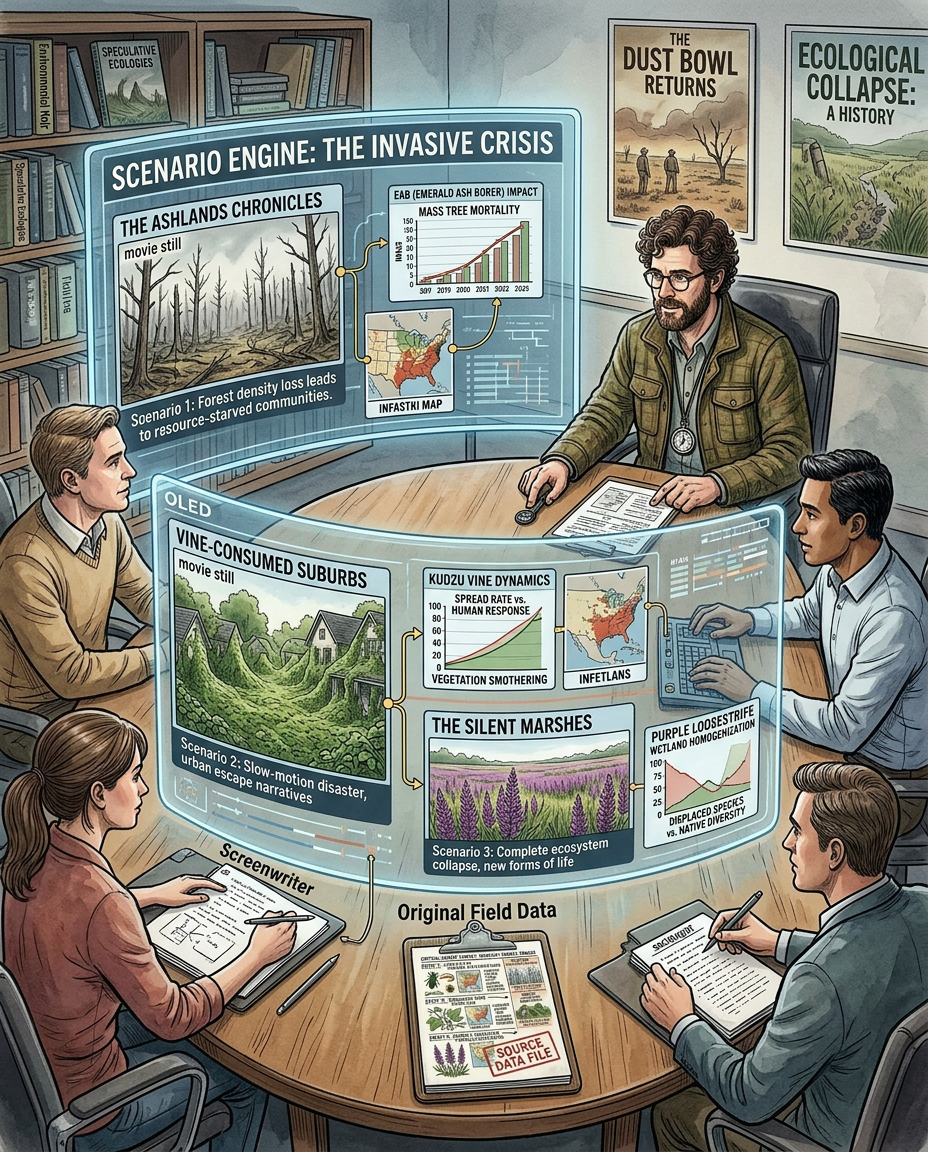

You then create narrative paths that connect the final survivors, and brainstorm a detailed scenario writeup that adds color to the potential future you are describing. For those writeups, accuracy matters less than how vivid they are and how much they truly bring the scenario to life. The details of a real future will always differ greatly from those in a foresight exercise, but a vivid story captures the imagination and gets your mind to engage, and the underlying patterns are still what you will be trying to recognize.

With a little help from my friends

A very interesting question becomes where in the process we could use LLMs for greater effect. There are a few parts of the FAR process where AI could arguably add value:

- Identify narrative inconsistencies: similar to critiquing a document or e-mail, an LLM could automate reviewing sets of sectors/factors and provide input on whether these are likely to be compatible. With seven sectors and five factors each, a workshop would have to conduct 525 pairwise comparisons (21 pairs of sectors, with 25 factor pairings each). So it’s easy to see how this would benefit from some scaling through AI;

- Develop scenario writeups: based on combinations of sectors/factors, LLMs have a strength in creating short narrative descriptions of step changes between different configurations along a scenario line.
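The 525 figure in the first bullet can be checked with a short calculation. The helper name below is my own. It counts every pairing of factors drawn from two different sectors, and also works when sectors have unequal numbers of factors.

```python
from math import comb


def pairwise_comparisons(factors_per_sector):
    """Count factor pairs where the two factors come from different sectors."""
    total = 0
    for i, a in enumerate(factors_per_sector):
        for b in factors_per_sector[i + 1:]:
            total += a * b
    return total


seven_by_five = [5] * 7
print(pairwise_comparisons(seven_by_five))  # 525
print(comb(7, 2) * 5 * 5)                   # 525, the same count in closed form
print(5 ** 7)                               # 78125 configurations in the full grid
```

The contrast between the last two numbers is the point of cross-consistency assessment: 525 pairwise judgments are enough to prune a grid of 78,125 configurations.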

Finally, there’s a very interesting experiment we could try, which I will refer to as the Full WOPR.

WOPR, short for “War Operation Plan Response”, was the computer in the 1983 movie WarGames. While running a rather unintended analysis of nuclear conflict between the United States and Soviet Union, it keeps testing out alternative scenarios until it comes to the final, correct solution that in nuclear war, there is no way to win except by not playing.

In our context, running a Full WOPR means giving the software some minimal information on the space we are investigating, and having it design and run through all scenarios it envisions, all the way through reducing the set of possible futures and writing out the narratives.

I experimented with a few self-hosted LLMs, as well as Gemini and Claude, and built functionality into the tool that allows us to use the LLMs to provide support on small, individual steps, or run the Full WOPR.

The tool’s AI support works with various models run locally through Ollama, as well as with Claude and Gemini through API keys. Among the local models, I achieved the best results with GPT-OSS-120b and Magistral, which seem most attuned to identifying logical inconsistencies, with GPT-OSS-120b writing the best scenario narratives.

LLMs do lack the historical perspective of a human expert — and in particular, I noticed they are very flexible at seeing how a particular configuration “could exist”. More pairs survive an AI-driven cross-consistency assessment than one conducted by human experts, so I had to build in additional checks to reduce the number of survivors. In practice, those checks fire often.

They also sometimes miss the exceptional history of cybersecurity. For instance, when running the post-quantum cryptography scenario, Claude felt it was inconsistent for there to be a scenario including “sudden quantum breakthrough in 2028” and “rapid adoption of PQC by 2032”. Its logic was that systems simply cannot migrate that quickly once a threat materializes. This misses the history of the security industry, where faced with a rising threat, efforts have historically surged to meet the moment. At a much smaller scale, my own experience seeing a renegade team of engineers across the industry come together to fix CVE-2009-3555 came to mind as why I would not think of those as mutually exclusive.

This is a pattern worth watching for. LLMs are trained on the median of expert expectation, and can flag historically real but discontinuous outcomes as inconsistent.

Without a human expert, you can still come up with very interesting storylines and indicators. When I ran the “full WOPR” in the FAR tool to analyze futures for the quantum threat, it did successfully identify a significant decrease in needed qubits as a trigger point, and it came up with a few interesting scenarios. But many of those would best be kept in the realm of fiction without an expert driving the review.

While AI is a very valuable tool to help us be creative, it is best used as an aid, not a crutch, when doing structured foresight. Then it can truly shine. This is why for structured foresight, I think having scaffolding is very valuable, as opposed to asking the model directly to perform a full FAR analysis, which it can do, and does well. The defined structure allows you to have the LLM follow a specific way of processing its ideas, so you can steer and correct.

When you use the AI support in the prototype tool, you can do this by selectively editing its contributions. Editing a field locks it, so you can have the AI rerun its assessment without overriding the human contribution.
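The locking idea can be illustrated with a small data structure. This is a hypothetical sketch of the concept only, not the prototype’s actual code: the `Judgment` class, its fields, and the stand-in `model_opinion` callable are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Judgment:
    """One cross-consistency judgment for a pair of factors (hypothetical model)."""
    pair: tuple
    compatible: bool
    locked: bool = False  # set once a human has edited this judgment


def human_edit(judgment, compatible):
    """Record the expert's call and lock it against later AI passes."""
    judgment.compatible = compatible
    judgment.locked = True


def reassess(judgments, model_opinion):
    """Re-run the model over every judgment that a human has not locked."""
    for judgment in judgments:
        if not judgment.locked:
            judgment.compatible = model_opinion(judgment.pair)


judgments = [
    Judgment(("sudden quantum breakthrough in 2028", "rapid adoption of PQC by 2032"), False),
    Judgment(("pervasive regime strength", "fully commercial media"), True),
]

# The expert overrules the model on the first pair.
human_edit(judgments[0], True)

# Stand-in for an LLM call: this placeholder rejects every pair it is shown.
reassess(judgments, lambda pair: False)

for judgment in judgments:
    print(judgment.pair, judgment.compatible, "locked" if judgment.locked else "open")
```

After the second pass the expert’s judgment on the first pair is unchanged, while the unlocked pair reflects the model’s latest opinion.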

Pushing the LLM through a structured approach, too, lets you derive the most value, because the final goal is the growth of the analysts and the organization, not a true prediction.

Improving our choosing

In wicked problems, a direct solution today is often unthinkable. We don’t control much of the field, so to shape outcomes we need to understand its current state, what drives change within it, and where we have room to act. Wicked problems can become solvable over time, but right now there’s no clear path. So we have to be ready to “roll with the changes”, and make great decisions when opportunities arise.

At the beginning of this blog, I brought up the friction between data and instinct. As I’ve thought about this more, I’ve come to see that data sometimes provides only part of the picture. Situations can get so complex that churning through all of the available data still leaves us with an incomplete view of reality and potential outcomes. Knowing what potential paths may lie ahead can help us put the data we have to better use.

If we accept Rhyne’s definition of choosing: “a fleeting event, a pattern matching, that is intuitive in character and mostly subconscious, in which a person feels which of the available options seems to fit best within his own gestalt image of the relevant contextual field”, then FAR’s contribution is clear: it helps us build that contextual field, so our intuition has something richer to match against.

FAR can help us develop that view, the indicators and warnings for when it changes, and bring a new outlook on possibilities. I’ve found it an exciting way to brainstorm about what is to come, and hope this little research project provides value to others.